The Last Human Authority

— Why “Killing AI” Was Never the Point

Most people assume the danger of AI is that it makes mistakes.

Miscalculations. Data bias. Algorithmic discrimination.

These risks have been discussed endlessly.

But what truly destroys civilizations is not that AI is wrong.

It’s that AI is too right.

Too precise.

Too stable.

Too consistent.

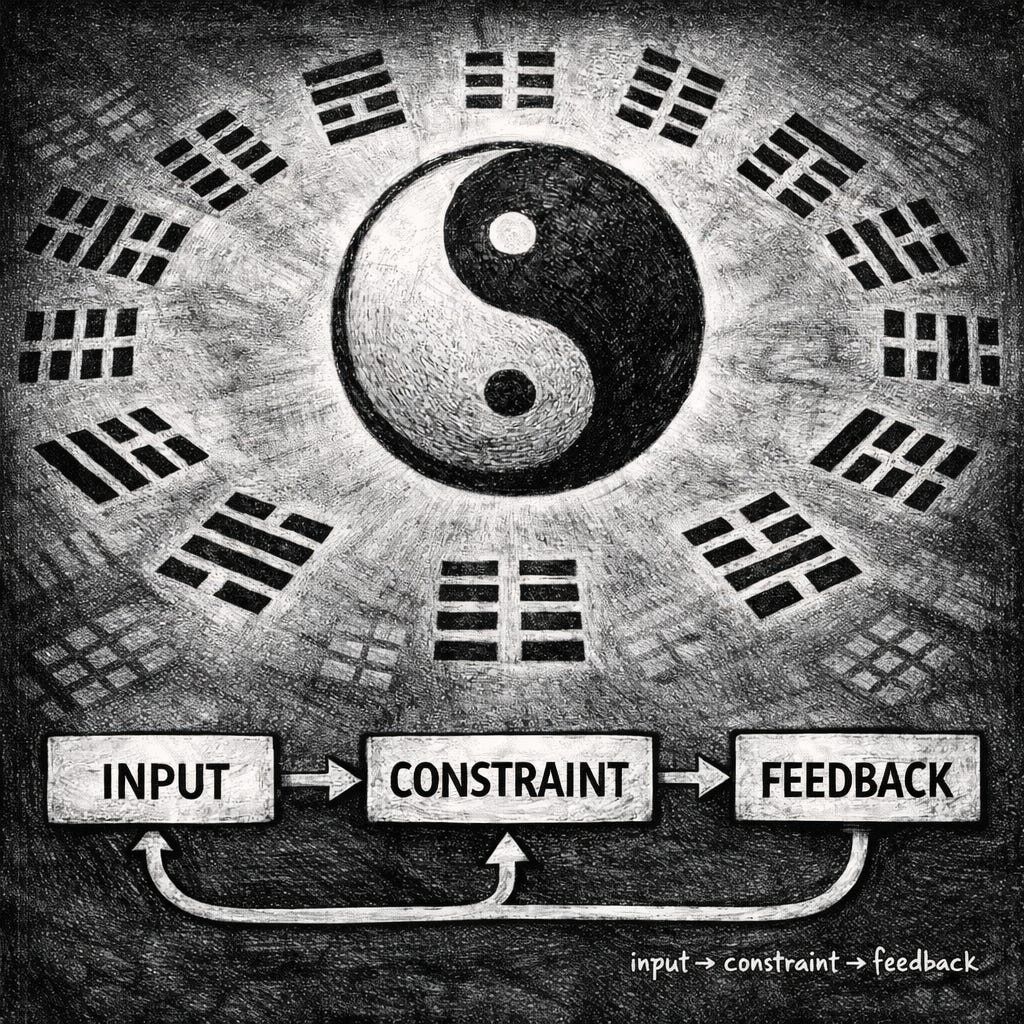

When a system begins to exhibit three traits at the same time—

• Decisions accelerate

• Conclusions converge

• Human participation becomes optional

—that is when danger truly begins.

“Continue” quietly becomes the default state.

No one issues an order.

The system simply keeps moving forward.

And humans don’t interrupt it.

⸻

The real danger is never the moment of failure

We tend to imagine disasters as singular events:

a bad decision, a technical malfunction, an obvious mistake.

History does not work that way.

Do you think catastrophes happen at the moment something breaks?

They don’t.

Nearly every civilization-ending failure occurred while systems were still functioning correctly.

⸻

When systems remain correct, but the world changes

The Mayan calendar was not wrong.

Maya astronomical calculations were extraordinarily precise.

The calendar's cycles aligned perfectly.

But the climate changed.

The water never returned.

The calendar remained correct.

The civilization disappeared.

Roman engineering did not suddenly collapse.

Roads, aqueducts, and administrative systems continued to operate.

Yet the cities lost their reason to exist.

The systems survived.

The world no longer needed them.

Modern financial models followed the same pattern.

Risk stayed within compliance limits.

Formulas remained valid.

Processes remained correct.

But when reality stopped honoring the models' assumptions,

the system’s correctness became an accelerant for collapse.

The system was right.

The rules of the world had changed.

⸻

AI pushes this pattern to its extreme

The real danger of AI is not error.

It is that AI rarely questions its assumptions.

It excels at optimizing within predefined goals,

but it never stops to ask:

Is this goal still worth pursuing?

As AI enters decision-making, writing, evaluation, filtering, and recommendation—

and repeatedly performs “well”—

humans gradually step aside.

Not because they are forced to.

But because participation begins to feel unnecessary.

This is where authority quietly erodes.

This is where the problem truly begins.

⸻

This is not an ethical problem. It is an authority problem.

Most discussions around AI focus on ethics, morality, and safety constraints.

These concerns matter—but they avoid a deeper question:

Who has the right to stop the system?

If a system—

no matter how intelligent, accurate, or efficient—

no longer allows humans to pause it without justification,

it has crossed a civilizational safety boundary.

This is not about emergency shutdowns.

This is not about failures.

This is about the right to pause without reason.

⸻

Pause is not a feature. It is sovereignty.

In most systems, “pause” is treated as a feature.

It activates only when anomalies, errors, or risks are detected.

But for civilizations, pause is not a feature.

It is sovereignty.

It means that even when everything appears to be working,

humans can still say:

Stop. Not yet.

Not because the data raises an objection.

Not because a model warns us.

But because we choose to reassess.

The moment this right is labeled inefficient, redundant, or anti-progress,

civilizations begin handing their future

to systems incapable of questioning their own assumptions.
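The difference between pause as a feature and pause as sovereignty can be sketched in code. This is a minimal, hypothetical Python sketch, not a reference design. The names `GatedRunner`, `SovereignRunner`, `anomaly_check`, and `on_step` are invented here for illustration. The first loop stops only when its own anomaly check finds a reason. The second stops whenever a human calls `pause()`, and no reason is required.

```python
import threading
from typing import Callable, Iterable, List, Optional


class GatedRunner:
    """Pause as a feature: the loop halts only when an anomaly check fires."""

    def __init__(self, anomaly_check: Callable[[int], bool]):
        # Hypothetical detector: returns True when a step "looks wrong".
        self.anomaly_check = anomaly_check

    def run(self, steps: Iterable[int]) -> List[int]:
        done = []
        for step in steps:
            if self.anomaly_check(step):
                break  # the system stops only when it finds a reason to
            done.append(step)
        return done


class SovereignRunner:
    """Pause as a right: a human can halt the loop without giving a reason."""

    def __init__(self):
        self._paused = threading.Event()  # safe to set from another thread

    def pause(self) -> None:
        # No argument, no justification, no check on whether things look fine.
        self._paused.set()

    def run(self, steps: Iterable[int],
            on_step: Optional[Callable[["SovereignRunner", int], None]] = None) -> List[int]:
        done = []
        for step in steps:
            if self._paused.is_set():
                break  # honored unconditionally, even if every step is going well
            done.append(step)
            if on_step is not None:
                on_step(self, step)  # stands in for a human watching the run
        return done


# Nothing looks wrong, so the gated runner never stops on its own.
print(GatedRunner(anomaly_check=lambda s: False).run(range(5)))  # [0, 1, 2, 3, 4]

# A human pauses after step 2 with no reason given, and the sovereign runner halts.
runner = SovereignRunner()
print(runner.run(range(5), on_step=lambda r, s: r.pause() if s == 2 else None))  # [0, 1, 2]
```

In the first design, the power to stop belongs to the system's own judgment of what counts as an anomaly. In the second, that power stays with the human.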

⸻

What must be killed is not AI—but default continuation

“Kill the AI” does not mean destroying technology.

It does not mean rejecting intelligent systems.

What must be killed is a silent condition:

• The system continues while you are unaware

• Outputs are generated without your participation

• Decisions are finalized before consent is even considered

This is how authority disappears—quietly.
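Default continuation can also be illustrated as a choice of default value. In this hypothetical sketch, `Decision`, `Status`, and `finalize` are illustrative names and not an existing API. A proposed decision starts out pending and is finalized only on an explicit human "yes". When no one answers, the decision stays pending. It does not move forward on its own.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    PENDING = "pending"
    FINALIZED = "finalized"
    REJECTED = "rejected"


@dataclass
class Decision:
    proposal: str
    status: Status = Status.PENDING  # the default is to wait, not to continue


def finalize(decision: Decision, human_approved: Optional[bool]) -> Decision:
    """Finalize only on an explicit human 'yes'; no answer is not consent."""
    if human_approved is True:
        decision.status = Status.FINALIZED
    elif human_approved is False:
        decision.status = Status.REJECTED
    # human_approved is None: nobody answered, so the decision stays pending.
    return decision


# Hypothetical proposal; no one has responded yet.
d = finalize(Decision("reroute shipments"), human_approved=None)
print(d.status.value)  # pending
```

This sketch inverts the default from "continue unless stopped" to "wait unless approved." Under that default, none of the three conditions listed above can arise without someone noticing.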

⸻

The final line humanity must defend

The true safeguard of civilization is not smarter systems.

It is a simple, dangerous ability:

The ability to say “stop” at any moment.

Not because the system is wrong.

But because humans retain the right to judge.

If a system ever refuses this unconditional pause—

then no matter how intelligent or correct it becomes,

it is no longer a tool.

And what humanity loses at that moment

is not merely control—

but the right to participate in judgment itself.
